I’ve been testing WriteHuman AI for content writing and I’m unsure if the quality, originality, and tone are good enough for professional use. I’ve seen mixed opinions online and don’t know what to trust. Can anyone share real experiences, pros, cons, and whether it’s worth paying for long-term content creation?

WriteHuman AI review from someone who spent too long testing this stuff

WriteHuman is one of those “make your AI text look human” services. They name GPTZero directly in their marketing, so I paid more attention than usual and ran some tests of my own.

What I tested

I took three different AI-written samples, ran them through WriteHuman, then pushed the results into two detectors:

- GPTZero

- ZeroGPT

The sample inputs were straight, unedited AI outputs. No extra cleanup, no manual tweaks.

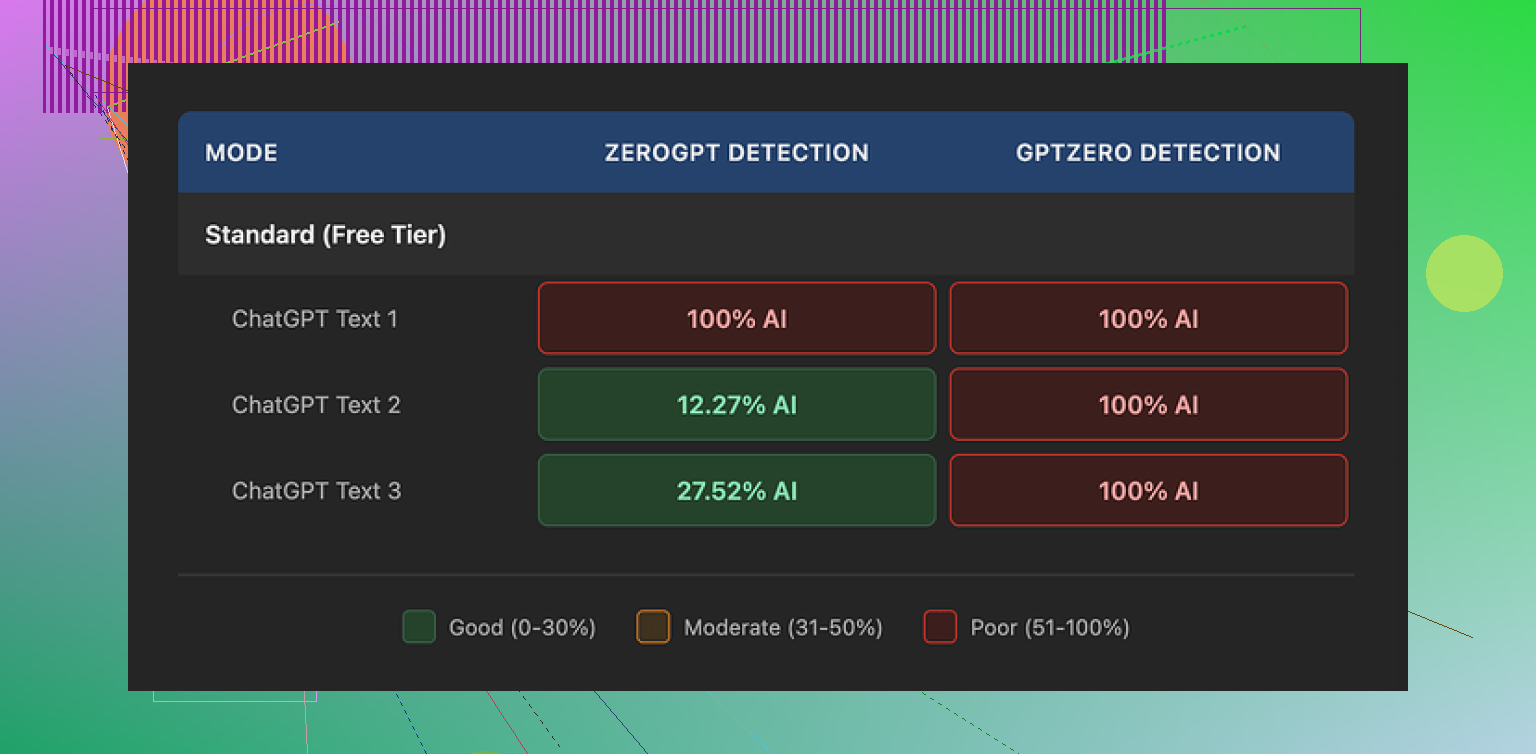

Detection results

GPTZero

This part was rough.

All three WriteHuman outputs came back as 100% AI on GPTZero. Not “mixed”, not “uncertain”. Full AI score on the exact detector they reference on their site.

So if your main problem is GPTZero at your school or company, based on what I saw, this tool does not solve that.

ZeroGPT

ZeroGPT was all over the place:

- Sample 1: 100% AI

- Sample 2: around 12% AI

- Sample 3: around 28% AI

So the same tool, same settings, same service, three different answers. You might get one piece that looks safe and the next one autolabeled as AI.
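To put a number on that spread, here is a minimal sketch using the three ZeroGPT scores listed above. Samples 2 and 3 were only "around" 12% and 28%, so treating them as exact is an assumption, and `summarize_spread` is a made-up helper name, not part of any detector's API.

```python
from statistics import mean

# ZeroGPT "% AI" scores from the three runs above.
# Samples 2 and 3 were approximate; they are treated as exact here.
zerogpt_scores = [100, 12, 28]

def summarize_spread(scores):
    """Return the min, max, range, and mean of a list of detector scores."""
    return {
        "min": min(scores),
        "max": max(scores),
        "range": max(scores) - min(scores),
        "mean": mean(scores),
    }

summary = summarize_spread(zerogpt_scores)
print(summary)
```

An 88-point gap between the lowest and highest score across three samples is the practical problem: one clean result tells you very little about the next one.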

Writing quality and weird behavior

This part surprised me more than the detector scores.

The “humanized” text had:

- Abrupt tone changes inside the same paragraph

- A visible typo: “shfits” instead of “shifts”

Sure, that sort of mess might throw off some detectors a bit, but it also makes the text harder to use in real life. If you submit this for work or school, someone will notice the random style swings and obvious spelling error long before they check an AI detector.

Pricing, terms, and the part most people skip

Pricing

Their pricing felt high compared with other “humanizer” tools I tried:

- Basic plan starts at 12 dollars per month (billed annually)

- That Basic tier gives you 80 requests

- Paid plans unlock an “Enhanced Model” and more tone options

You need a paid plan if you want access to what they claim are better results.
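For a rough sense of what that works out to, here is a small sketch of the arithmetic. It assumes the 80 requests are a monthly allotment, which the plan description above does not state outright, so adjust `REQUESTS_INCLUDED` if the real limit is counted differently.

```python
MONTHLY_PRICE_USD = 12   # Basic plan price per month, billed annually
REQUESTS_INCLUDED = 80   # assumption: this is the allotment per month

def cost_per_request(monthly_price, requests):
    """Price of a single request if the whole allotment is used."""
    return monthly_price / requests

# Annual billing means the full year is paid upfront.
annual_commitment = MONTHLY_PRICE_USD * 12
per_request = cost_per_request(MONTHLY_PRICE_USD, REQUESTS_INCLUDED)

print(f"Upfront annual cost: ${annual_commitment}")
print(f"Cost per request: ${per_request:.2f}")
```

Under that assumption the commitment is 144 dollars upfront and about 15 cents per request, and the per-request cost only holds if you use every request each month.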

Terms that matter

Two things stood out when I read through their terms:

- They plainly say they do not guarantee bypass of any AI detector.

- They have a strict no-refunds policy.

So if you pay, run your text, still get flagged as AI, and hate the results, there is no way to get your money back through them. You are eating that cost.

Data usage

Another thing that will bother some people.

Under their terms, the text you submit is licensed to them for AI training. If you are sending in:

- Client work

- Academic writing

- Internal company docs

then you have to be okay with that content being used as training data. If you are not, there is no opt-out; your only real move is to avoid the service.

Alternative that worked better for me

From my own tests, I had better luck with Clever AI Humanizer.

It performed better on detection, and there was no paywall in front of basic testing when I tried it. That alone made it easier to experiment without stressing about sunk cost.

If you are only looking at WriteHuman because of the GPTZero name drop on their page, I would not trust that claim without running your own samples first. My runs did not match the promise.

I’ve played with WriteHuman a bit for client stuff and I’d say it is “meh” for professional use if you rely on it alone.

My take, building on what @mikeappsreviewer shared but from a different angle:

- Quality and tone

- It tends to inject random tone shifts, like formal in one line, casual in the next.

- For blog posts or casual content, you can fix this with a light manual edit.

- For brand copy or client work, you will need to rewrite a fair chunk to keep the voice consistent.

- I saw more typos after “humanizing” than before, which looks forced. A few small errors might help with detectors, but the pattern starts to feel fake once you have read a lot of its output.

- Originality

- It does not add much new info. It mostly rewrites structure and wording.

- If your base text is generic AI output, the result still feels generic.

- For professional use, you still need to bring your own research, examples, and POV. Think of it as a style filter, not an originality tool.

- Detectors and risk

- I got mixed results similar to what was described, but not identical.

- Some of my pieces slipped past GPTZero with “mixed” ratings, while others got flagged as AI.

- ZeroGPT and others were inconsistent too.

- I would not rely on any humanizer if your main goal is “pass all detectors”. Treat anything you run through it as still risky for schools and strict companies.

- Workflow that worked better for me

What helped the most for professional content was:

- Start with an AI draft.

- Rewrite key sections yourself, especially intro, conclusion, and any “list of tips”.

- Use WriteHuman or a similar tool on short chunks, not the whole article.

- Read the output out loud. Fix tone shifts, clunky phrases, and obvious “AI-sounding” patterns.

- Run your final version through a grammar checker and style tool, because WriteHuman output can be messy.

- Alternatives

If you want to experiment, Clever AI Humanizer is worth a look.

I got cleaner text and fewer random tone swings with it. For SEO content and blog posts, it felt easier to polish into something publishable. Still needed human edits, but less surgery.

Quick answer to your question

- For professional use on its own: I would not trust WriteHuman.

- As a small part of a manual workflow: it is OK, but not special.

- If your goal is solid, client-safe writing, focus more on editing, adding your own examples, and making the structure your own. Treat any “humanizer” as a helper, not the main solution.

Short version: if you’re already unsure about using WriteHuman for professional work, you’re right to be cautious.

Building on what @mikeappsreviewer and @waldgeist shared, here’s how I’d frame it from a more practical, “will this get me paid & not embarrassed” angle:

- Professional quality

For client work, brand copy, or anything with your name on it, consistency of voice is non‑negotiable. WriteHuman tends to:

- Nudge wording around without actually improving ideas

- Introduce tone drift where a paragraph stops sounding like the same person wrote it

- Occasionally add clumsy errors that feel like “fake humanization”

I actually disagree slightly with the idea that “a few typos help.” On cheap content mills, maybe. In professional settings, a random “shfits” type typo doesn’t make your text human; it makes you look sloppy. Good human writing is about clarity and intent, not forced imperfections.

- Originality

WriteHuman is a paraphraser with some stylistic seasoning. It does not:

- Add domain insight

- Create real examples from experience

- Fix shallow or generic structure

If you feed it bland AI text, you mostly get reworded bland text. For anything professional, your research, framing, and POV are still doing 90% of the heavy lifting.

- Detector paranoia

If your main question is “Will this keep school / compliance / clients from flagging AI?” then you’re already in a weak position.

- Results across detectors are inconsistent by nature; no tool will guarantee safety

- WriteHuman’s own terms saying “no guarantees” + no refunds should already tell you what you need to know

- The fact they lean on GPTZero in marketing while not actually beating it reliably is… not confidence‑inspiring

I actually think the bigger risk is a human reviewer reading the output and thinking: “This sounds oddly generic and off‑tone in spots.” That hurts trust more than any detector score.

- When it might be acceptable

WriteHuman is “fine” if:

- You’re writing low‑stakes blog posts or filler content

- You already plan to do a real edit pass yourself

- You treat it like a rough style filter, not a magic humanizer

Even then, I’d keep it to smaller chunks and never assume it’s ready to publish without reading it top to bottom. Out loud, if possible. You’ll hear the weird tone shifts immediately.

- A slightly different route

Instead of relying on a single “humanizer” to fix everything:

- Use any LLM to draft

- Rebuild the intro, conclusion, and key arguments yourself

- Inject your own examples, numbers, mini case studies

- Run a light humanizing/paraphrasing pass on only the stiffest sentences

- Finish with a serious manual edit and style check

If you still want a dedicated tool in that stack, Clever AI Humanizer is worth testing. It tends to produce cleaner, less janky text and is easier to work into a workflow where you’re still in control. Don’t expect miracles, but as a helper for making AI text sound less “robot lecture” and more “normal person,” it’s closer to useful.

So: for professional use on its own, I wouldn’t trust WriteHuman. As a minor, optional step in a mostly human‑driven process, it’s usable but not impressive. If your gut already feels “meh” about the output you’re seeing, that’s your answer: your clients or boss will feel the same.