I recently tried Monica AI Humanizer for some content polishing and I’m unsure if it’s actually improving readability or just rephrasing text in a way that might hurt SEO or sound unnatural. Can anyone share real experiences, pros and cons, and tips on using Monica AI Humanizer effectively for blog posts and web content so I can decide if it’s worth keeping in my workflow?

Monica AI Humanizer Review – from someone who tried to make it work

I spent an afternoon messing with the Monica AI Humanizer, trying to get something usable past the common detectors. Short version: it did not go well.

Link for reference:

Monica AI Humanizer

Monica’s “one button, no options” problem

The humanizer is a single-click thing. You paste text in, hit the button, and that is it.

No controls for:

- tone

- strength of rewrite

- target style

- “risk” level or anything similar

That sounds simple, but in practice it locks you into whatever they think is “human.” If the output fails a detector, you have nothing to tweak. You hit the button again and hope for different luck.

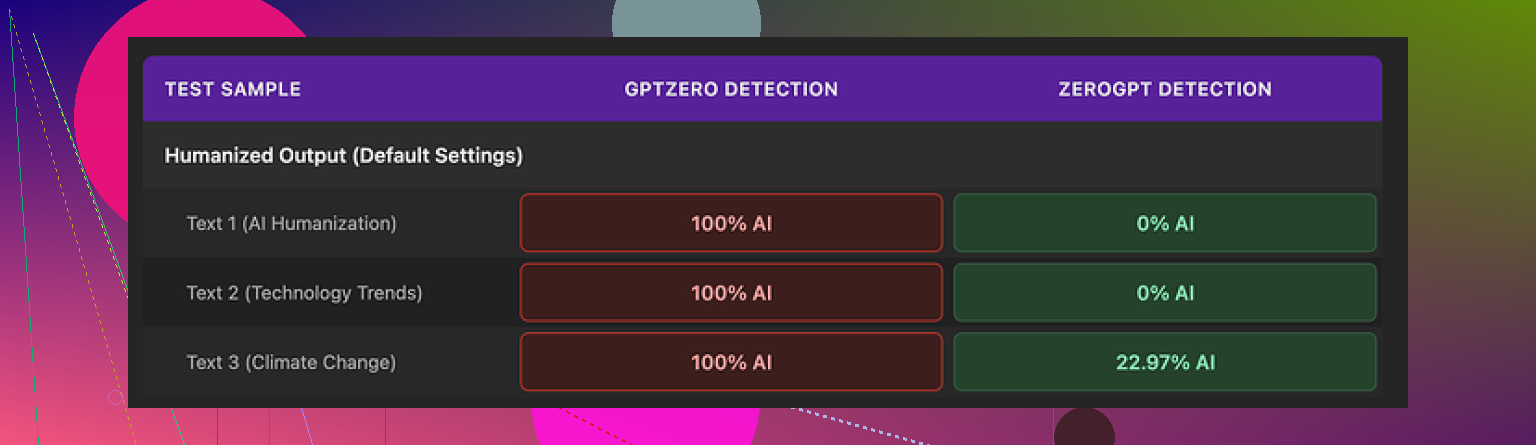

I ran the same base text through it several times, then pushed results through a couple of detectors.

Here is what happened:

- GPTZero marked every Monica humanized output as 100% AI

- ZeroGPT was less harsh:

  - two samples came back at 0%

  - one landed around 23% “AI”

Once GPTZero started throwing 100% AI on all Monica results, the tool became basically useless for any situation where you do not know which detector your text will face. If your school, client, or company relies on GPTZero, this is a hard fail.

Quality of the writing

I scored the writing around 4/10 for real-world use.

Here is what I saw:

- It introduced new typos into clean text:

  - my source had no obvious mistakes

  - output had a weird “Ubt” where “But” should be

- It sometimes fixed punctuation in a strange way:

  - added apostrophes in places that did not need them

- One output started with a random “[ABSTRACT” at the beginning, like it grabbed a fragment from some academic template

- It kept em dashes from the source and even sprinkled in more

For a tool that is supposed to make AI text feel more like something a person wrote, keeping and adding em dashes is the wrong direction. Detectors and teachers both tend to flag that style.

The overall vibe was: “AI text that tried to pretend it is not AI, and tripped over its own shoelaces.”
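If you want to catch these glitches mechanically before doing a human read, a small before/after scan helps. This is a rough sketch in plain Python with hypothetical helper names (nothing here is part of Monica or any detector). It assumes you have the source text and the humanized output as two strings, and it only flags the mechanical artifacts listed above: extra em dashes, extra apostrophes, unclosed bracket fragments like “[ABSTRACT”, and letter-swap typos like “Ubt”.

```python
import re

EM_DASH = "\u2014"
APOSTROPHES = ("'", "\u2019")  # straight and curly apostrophes
WORD = re.compile(r"[A-Za-z]+")
# An opening bracket plus a word with no closing bracket before the next "[",
# e.g. "[ABSTRACT" stuck at the start of a paragraph.
UNCLOSED = re.compile(r"\[\w+(?![^\[\]]*\])")


def count_apostrophes(text: str) -> int:
    return sum(text.count(a) for a in APOSTROPHES)


def artifact_report(source: str, output: str) -> dict:
    """Flag mechanical artifacts a humanizer introduced into `output` (heuristic)."""
    src_words = {w.lower() for w in WORD.findall(source)}
    # Sorted-letter "signatures" let us spot letter swaps such as But -> Ubt.
    src_signatures = {"".join(sorted(w)) for w in src_words}
    transposed = [
        w for w in WORD.findall(output)
        if len(w) > 2
        and w.lower() not in src_words
        and "".join(sorted(w.lower())) in src_signatures
    ]
    return {
        "em_dash_delta": output.count(EM_DASH) - source.count(EM_DASH),
        "apostrophe_delta": count_apostrophes(output) - count_apostrophes(source),
        "unclosed_brackets": UNCLOSED.findall(output),
        "possible_transpositions": transposed,
    }


report = artifact_report(
    "But the draft was clean.",
    "[ABSTRACT Ubt the draft was clean \u2014 mostly.",
)
print(report)
# {'em_dash_delta': 1, 'apostrophe_delta': 0, 'unclosed_brackets': ['[ABSTRACT'], 'possible_transpositions': ['Ubt']}
```

The transposition check is only a heuristic: it will occasionally flag a legitimate anagram of a source word, and it cannot catch typos that are not letter swaps, so it supplements a read-through rather than replacing it.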

Pricing and where the humanizer sits in Monica’s ecosystem

The humanizer is not the main focus of Monica. It is part of a bigger bundle.

Pricing I saw:

- Pro plan from about $8.30 per month if you pay yearly

Monica itself is an all-in-one AI suite:

- chatbots

- image generation

- video-related tools

The humanizer feels like an extra utility inside that package, not a flagship feature. If you already pay for Monica for other things, the humanizer is a “why not” extra you can click on and test.

If your only goal is to reduce AI detection or get safer text out of an LLM, paying for Monica purely for this humanizer does not make sense based on my tests.

How it compares to other options

I ran the same base content through another tool on the same site that hosts the review: Clever AI Humanizer at

On the same detectors and with the same starting text, Clever:

- produced more natural wording

- stayed closer to normal human mistakes instead of glitchy ones like “[ABSTRACT” and “Ubt”

- did better on detection checks across different tools

Key point: Clever did not require payment for what I tested; Monica did.

So from a practical perspective:

- if you already use Monica for chatbots or images, you might try the humanizer because it is there

- if your priority is AI detection avoidance, Monica’s humanizer is a poor choice, especially with GPTZero involved

- Clever AI Humanizer performed better for me and did not require a subscription for basic use

If you depend on your text passing detection for school, client work, or job stuff, I would not trust Monica’s humanizer as your main solution. It feels more like an optional side feature than a serious tool for that job.

I had a similar experience to you and to what @mikeappsreviewer shared, but my take is a bit different on the SEO and readability side.

Short version

For polishing content, Monica AI Humanizer feels risky for SEO and brand voice. It tends to rephrase too aggressively, adds odd quirks, and you have no control knobs to dial it in.

Here is what I saw in my tests.

- Readability

• It often inflated simple sentences into longer ones.

• It reused the same connectors over and over, which made paragraphs feel robotic.

• I also saw occasional random artifacts at the start of paragraphs, similar to the “[ABSTRACT” issue mentioned earlier.

For blog posts or landing pages, this kind of pattern hurts user experience. People skim. Long, slightly awkward sentences slow them down.

- SEO impact

SEO risk is less about “AI detection” and more about these points:

• Keyword drift. It sometimes removed or changed exact phrases that I wanted for search intent. For example, “best budget gaming monitor” turned into “top affordable monitor for games.” That looks harmless, but if your keyword research says the exact phrase matters, you lose alignment.

• Over-optimization in weird spots. In one test it repeated a key phrase three times in a short paragraph, which can look spammy.

• Structure changes. It sometimes merged short, scannable sentences into dense blocks. That hurts dwell time, and it can lower CTR when those sections feed your meta descriptions or featured snippets.

For SEO content, I stopped trusting it and went back to doing a lighter pass in a standard LLM, then a manual edit.

- “Human” feel vs detectors

I disagree a bit with the idea that passing GPTZero or any single detector should be your main metric. Those tools flag lots of human text. For clients, what mattered more was:

• Does it sound like their brand voice?

• Does it keep domain-specific terms?

• Does it avoid obvious AI tics like repetitive phrasing or strange transitions?

Monica’s one-click workflow made this hard. You cannot set tone, risk level, or rewrite strength. You end up regenerating until something feels “good enough,” which eats time.

- Better workflow that helped

Here is what worked better for me:

• Use your main LLM to get a solid draft.

• Run it through a more controllable humanizer instead of Monica. Clever AI Humanizer did better here for me. The text felt closer to natural writing and less glitchy. It also tended to keep key phrases intact, which is safer for SEO pages. If you want to test it, try something like make your AI text sound more natural.

• Do a quick manual pass:

– Check your main keywords stayed in.

– Check headings still match search intent.

– Read aloud a few lines to catch awkward bits.

That last human pass took 5 to 10 minutes per article but saved me from odd artifacts and typos like “Ubt” that Monica output for some users.

- When Monica is “OK enough”

If you are:

• Cleaning short, low stakes content like internal docs or quick emails.

• Not targeting specific keywords.

Then Monica is usable as a fast polish tool. I would not rely on it for money pages, affiliate content, or school work that faces strict checks.

SEO-friendly version of your topic line

“Monica AI Humanizer Review for SEO and Content Quality. Is it improving readability or hurting your rankings and sounding unnatural? Real user experiences, AI detection tests, and practical alternatives like Clever AI Humanizer.”

If your main goal is polished SEO content that sounds human, I would park Monica for now and build a workflow around something like Clever AI Humanizer plus a short manual edit.

I’m in the same camp as @mikeappsreviewer and @sonhadordobosque on the “one button, no control” issue, but for slightly different reasons.

For me, Monica’s humanizer is less of an SEO-helper and more of a content roulette wheel.

What actually happened when I used it

- Short, clear sentences turned into longer, clunkier ones. Readability score went down in Hemingway and similar tools.

- It sometimes shifted key phrases just enough to mess with keyword targeting. For informational posts that might be fine, but on commercial pages that kind of drift is annoying.

- The “human” flavor felt generic. Same connectors, same transitions, and a weird rhythm that starts to sound AI-ish when you read a few paragraphs in a row.
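A quick way to confirm the “sentences got longer” effect without a third-party app is to compare average words per sentence before and after. This is a rough proxy I'm assuming for illustration, not how Hemingway or any specific readability tool scores text, and the sentence split is naive (decimals and abbreviations will throw it off). The function names are hypothetical.

```python
import re


def avg_sentence_length(text: str) -> float:
    """Average words per sentence, splitting naively on runs of . ! ?"""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        return 0.0
    return sum(len(s.split()) for s in sentences) / len(sentences)


def lengthening_ratio(original: str, rewritten: str) -> float:
    """Values above 1.0 mean the rewrite uses longer sentences on average."""
    base = avg_sentence_length(original)
    return avg_sentence_length(rewritten) / base if base else float("inf")


before = "Buy it. It is cheap."
after = "You should consider buying it, since it is quite cheap."
print(lengthening_ratio(before, after))  # 4.0
```

Running it per section rather than per article keeps one bloated paragraph from being averaged away by the rest of the page.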

I’m not as worried as some people are about AI detectors. They are inconsistent and flag legit human text all the time. Where I do disagree a bit with others here: if a humanizer introduces typos like “Ubt” or random bracketed fragments, I don’t see that as “humanizing.” That is just low quality. Real human mistakes are more about style, tone, and uneven structure, not broken words and copy-paste artifacts.

SEO angle

In my tests, the main risks were:

- Keyword dilution: primary phrases replaced with close synonyms. Fine for casual posts, not fine if the page is targeting a specific query to rank.

- Weird repetition: sometimes it repeated a term inside one paragraph and then ignored it where I actually needed it, which is the worst combo.

- Layout issues: scannable bullet points and short sentences sometimes got merged into chunky paragraphs. That hurts skim readers and can reduce engagement.

So if your main concern is “Is Monica AI Humanizer improving readability or hurting SEO,” my answer is: it tends to hurt both once you scale beyond a few paragraphs.
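To check the keyword dilution and repetition points above on your own pages, a per-paragraph count of the exact target phrase is enough for a first pass. This sketch assumes plain text with paragraphs separated by blank lines, case-insensitive exact matching, and a limit of two uses per paragraph that is an arbitrary choice, not an SEO rule; the helper names are hypothetical.

```python
import re


def phrase_counts(text: str, phrase: str) -> list:
    """Case-insensitive count of an exact phrase in each blank-line-separated paragraph."""
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    pattern = re.compile(r"\b" + re.escape(phrase) + r"\b", re.IGNORECASE)
    return [len(pattern.findall(p)) for p in paragraphs]


def keyword_check(original: str, rewritten: str, phrase: str,
                  max_per_paragraph: int = 2) -> dict:
    before = phrase_counts(original, phrase)
    after = phrase_counts(rewritten, phrase)
    return {
        "before": sum(before),
        "after": sum(after),
        # Dilution: the exact phrase was there before and is gone now.
        "dropped": sum(before) > 0 and sum(after) == 0,
        # Repetition: 0-based indexes of paragraphs where the phrase is stuffed in.
        "overused_paragraphs": [i for i, n in enumerate(after) if n > max_per_paragraph],
    }


phrase = "best budget gaming monitor"
original = "Our pick for the best budget gaming monitor is below."
rewritten = "Our pick for the top affordable monitor for games is below."
print(keyword_check(original, rewritten, phrase))
# {'before': 1, 'after': 0, 'dropped': True, 'overused_paragraphs': []}
```

Exact matching is deliberate here: it flags close-synonym swaps as drops, which is what matters if your keyword research says the exact phrase counts.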

Alternative that fit better in my workflow

When I swapped it out for Clever AI Humanizer, I noticed:

- The tool kept my core phrases intact more often, which is huge for SEO pages.

- The output read more like something I’d actually write on a decent day, instead of sounding like a thesaurus filter.

- Less cleanup. I still edited, but I was fixing nuance and tone, not mystery typos and random tokens.

If you want to see the difference yourself, take a chunk of your content, run it through Monica, then through a smarter AI text refinement tool, and compare which one you’d actually publish without embarrassment.

Where Monica is “ok-ish”

- Internal notes, drafts, low stakes stuff where ranking and brand voice are not critical.

- Quick rephrasing when you just want something “different,” not necessarily “better.”

For client work, authority content, or anything tied to revenue or grades, I would not rely on Monica’s humanizer as more than a rough first pass.

Cleaner, SEO-friendly topic version you can actually use

Monica AI Humanizer Review for Content Creators: Does It Really Improve Readability or Just Risk Your SEO Rankings? Real user experiences, AI detection tests, and practical alternatives like Clever AI Humanizer for more natural and search-friendly content.

Monica’s humanizer feels like a “black box spinner,” which is fine for quick throwaway edits and pretty weak for anything that has to rank or represent a brand.

A few angles that weren’t fully covered yet:

1. Readability vs “pattern smell”

What bothered me most was not only the lengthening of sentences (which others already flagged) but the rhythm. After a few paragraphs you can almost predict:

- Intro clause

- Connector like “However” or “Additionally”

- Mildly fancy verb

- Soft hedge at the end

Humans repeat patterns, but not that consistently. At scale this pattern smell is a risk for both user trust and any future classifier that looks at style rather than just token choices.
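One way to put a number on this pattern smell is to measure how often sentences open with a stock connector. The connector list below is a hypothetical starter set based on the examples above, and the sentence split is naive; what share counts as “too often” is your call, since I'm not aware of any standard threshold.

```python
import re
from collections import Counter

# Hypothetical starter list; extend it with whatever tics you notice.
CONNECTORS = ("however", "additionally", "moreover", "furthermore", "overall")


def opener_profile(text: str, connectors=CONNECTORS):
    """Return (connector counts, share of sentences that open with a connector)."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    # Lowercase the first word and strip punctuation, so "However," -> "however".
    first_words = [re.sub(r"[^a-z]", "", s.split()[0].lower()) for s in sentences]
    hits = Counter(w for w in first_words if w in connectors)
    share = sum(hits.values()) / len(sentences) if sentences else 0.0
    return hits, share


text = ("The screen is bright. However, it is heavy. "
        "Additionally, the stand wobbles. However, the price is fair.")
hits, share = opener_profile(text)
print(hits.most_common(), share)  # [('however', 2), ('additionally', 1)] 0.75
```

Comparing this share between your original draft and the humanized version says more than the absolute number, because some connector use is normal in any writing.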

I actually disagree a bit with the idea that Monica is “OK for internal docs.” If a team adopts it heavily, you end up with a weird monoculture voice across everything, which can make it harder to tell which notes were written by whom and when. For collaboration, that’s not great either.

2. SEO: not just keywords, but intent clarity

You mentioned worrying it might hurt SEO. The problem I saw is less “lost keyword” and more “blurred intent”:

- It softens strong commercial phrasing into info-style phrasing.

- It sometimes turns clear benefit statements into generic “nice to have” wording.

Search engines are getting better at mapping intent. If your “best budget gaming monitor” section suddenly reads like a casual buying guide instead of a comparison page, you may stay indexed but lose out on the exact intent you wanted to signal.

So I treat Monica’s output as potentially misaligned content rather than just imperfectly optimized content.

3. Where something like Clever AI Humanizer fits in

If your goal is: “I already have a decent draft and I just want it to feel less robotic without wrecking my SEO,” a more controllable tool is mandatory.

Pros I saw with Clever AI Humanizer compared to Monica:

- Tends to preserve main keyword phrases more faithfully.

- Less aggressive on sentence expansion, so scannability survives.

- Fewer random artifacts like bracketed fragments or glitchy typos.

Cons to be aware of:

- It can still smooth your writing so much that distinct personality fades if you do not revise after.

- On more technical content, it occasionally tries to “simplify” terminology that should stay exact.

- You still need a manual pass for brand voice and headings that match search intent.

So I see Clever AI Humanizer as a safer polishing layer, not a substitute for editing.

4. How I’d actually use these in a workflow

Different from what others suggested:

- I do not run whole articles through a humanizer in one go. I chunk by section so I can keep a tight grip on headings and primary keywords.

- I keep the original and the humanized version side by side and specifically check:

  - Did my main phrase vanish or mutate too much?

  - Did bullet lists stay as lists?

  - Did the intro still directly answer the query?

Monica made this painful because it often restructured sections unpredictably. Clever AI Humanizer adjusted phrasing while mostly respecting structure, which is a crucial difference.
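The structural half of that side-by-side check (did headings survive, did bullet lists stay lists) is easy to half-automate. This sketch assumes your drafts are Markdown with `#` headings and `-`, `*` or `•` bullets, and the helper names are hypothetical; it checks structure only, so keyword and intent review still happen by eye.

```python
import re

# Markdown-style headings ("# Title" ... "###### Title") and bullet markers.
HEADING = re.compile(r"^#{1,6}[ \t]+(.*)$", re.MULTILINE)
BULLET = re.compile(r"^[ \t]*[-*\u2022][ \t]+", re.MULTILINE)


def split_sections(markdown: str) -> list:
    """Return (heading, body) pairs; text before the first heading gets heading ''."""
    # With one capture group, re.split yields [preamble, h1, body1, h2, body2, ...].
    parts = HEADING.split(markdown)
    sections = [("", parts[0])] if parts[0].strip() else []
    sections += list(zip(parts[1::2], parts[2::2]))
    return sections


def structure_diff(original: str, humanized: str) -> list:
    """List structural regressions between an original draft and its rewrite."""
    orig, new = split_sections(original), split_sections(humanized)
    issues = []
    if [h for h, _ in orig] != [h for h, _ in new]:
        issues.append("headings changed, merged, or reordered")
    # Compare bullet counts section by section (zip stops at the shorter list).
    for (heading, body_a), (_, body_b) in zip(orig, new):
        a, b = len(BULLET.findall(body_a)), len(BULLET.findall(body_b))
        if b < a:
            issues.append(f"{heading!r}: bullets went from {a} to {b}")
    return issues


draft = "# Picks\n- A\n- B\n- C\n## Verdict\nBuy A.\n"
rewrite = "# Picks\nWe like A, B and C.\n## Verdict\nBuy A.\n"
print(structure_diff(draft, rewrite))  # ["'Picks': bullets went from 3 to 0"]
```

Because it works section by section, it fits the chunked workflow: humanize one section, run the diff, and only move on when the list is empty.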

5. Quick answer to your core worry

- For SEO content and public-facing pages: Monica AI Humanizer is more likely to hurt readability and intent than help, especially if used on full pages.

- For small, casual pieces where search and voice do not matter much: it is usable, but there are smoother options.

- If you keep using a humanizer, treat it as a light stylistic filter plus manual edit, and lean toward something like Clever AI Humanizer that is less chaotic than what you and others reported with Monica.

If you test both, do it on a single money page, then run before/after through your own keyword checklist and a readability tool. That comparison will tell you more than any detector score.