I’m having trouble with an AI detector tool flagging my original writing as AI-generated. I need help figuring out why this is happening and how I can avoid false positives. Anyone else experience this or know how to solve it? Looking for advice or recommendations on trustworthy AI detection tools.

Yeah, those AI detector tools can be super frustrating, can’t they? Had my own original essay get flagged last semester, even though I wrote every word. From what I’ve seen, these tools look for certain patterns like repetitive sentence structure, lack of personal anecdotes, and “overly perfect” grammar. If your writing’s super formal, generic, or just too polished, that’ll sometimes trigger the detector.

So, here’s what I started doing:

- Add in more unique phrasing or slang.

- Break up long sentences and throw in a question or two.

- Drop in some personal stories or references, even just a quick “in my experience…”

- Avoid using the same sentence length over and over—vary it up!
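
If you want to see how uniform your sentence lengths actually are before you start rewriting, here's a rough Python sketch. It's my own illustration, not how any particular detector works: the function names are made up, the sentence splitting is naive (it just breaks on `.`, `!` and `?`), and the "spread" is just a standard deviation of word counts per sentence.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! and ? (naive) and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def length_spread(text: str) -> float:
    """Population standard deviation of sentence lengths (0.0 means all equal)."""
    lengths = sentence_lengths(text)
    return statistics.pstdev(lengths) if lengths else 0.0


uniform = "I like this tool. It works very well. You should try it."
varied = "Honestly? I did not expect much from it when I first tried it. It works."

print(sentence_lengths(uniform), length_spread(uniform))
print(sentence_lengths(varied), length_spread(varied))
```

A spread near zero means every sentence is about the same length, which is the pattern the last bullet is warning about. There's no threshold that means "safe", so treat it as a way to compare drafts against each other.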

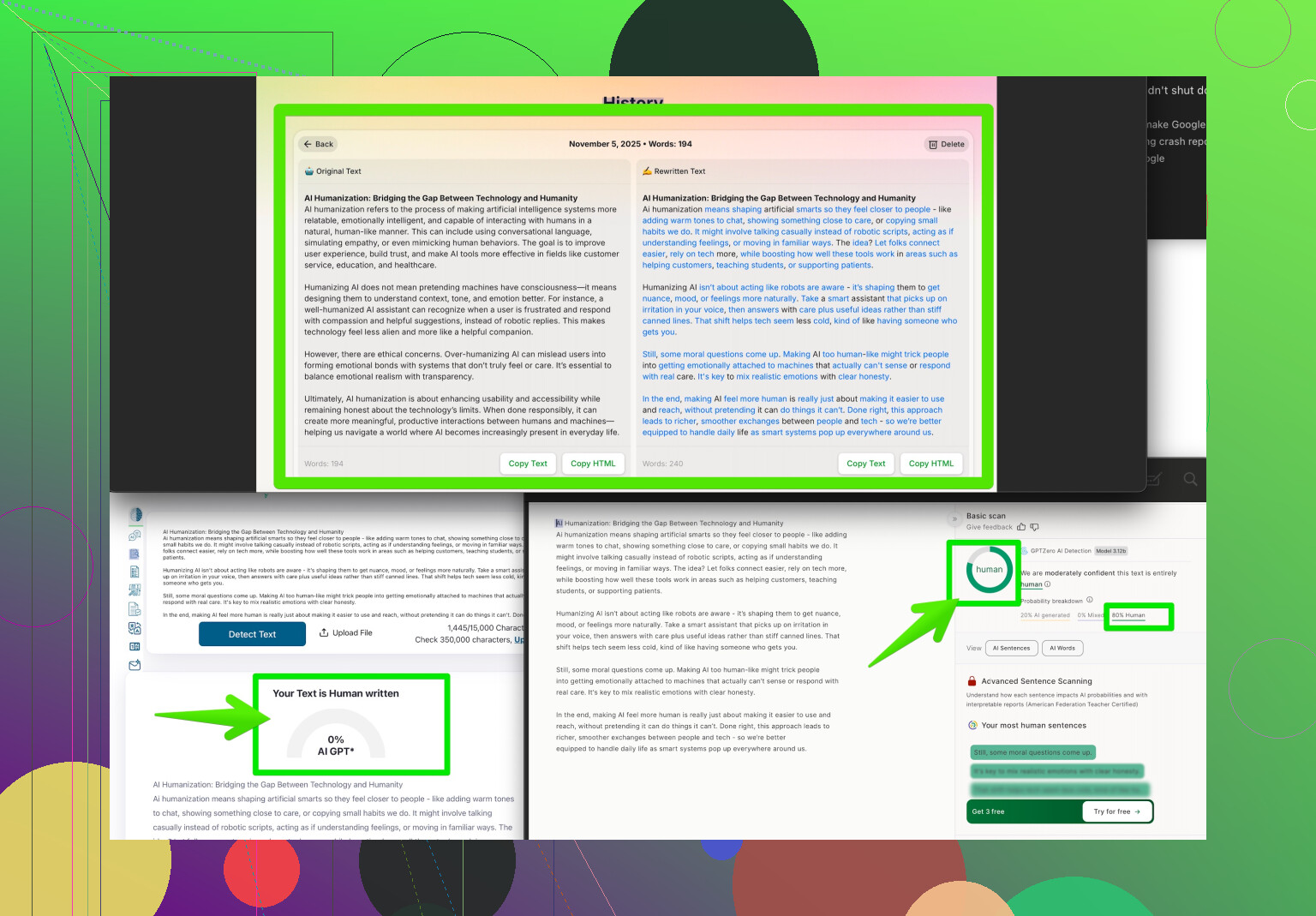

Also, before submitting anything, I run my stuff through an AI humanizer. Something like Clever AI Humanizer can really help your writing sound more natural and honest. It’s worth checking out if this problem keeps happening.

If you want more details or a step-by-step look, check out this resource: make your writing undetectably human. Helped me dodge plenty of false flags.

Anyone else got flagged after changing things up, or is it just me?

Not gonna lie, these AI detectors have been the bane of my existence lately. Got flagged twice for work I literally sweated over, and honestly, I feel like sometimes they just throw darts and hope something sticks. @kakeru shared some spot-on ideas (the “overly perfect grammar” thing is real), but I gotta say, I disagree with the whole making your writing “less polished” idea. Like, why should I have to dumb down my own stuff just to not set off a glorified robot snitch, you know?

Here’s what I’ve picked up digging through academic forums and tech spaces:

- Check your sources & references. If everything is bland or strangely uniform, AI detectors love that. Mix in alternative sources, quirky or less mainstream facts, or toss in something only a real person would know from actual lived experience.

- Look for the “predictability factor.” AI detectors often work off how predictable your word choices and sentence combinations are (usually described as perplexity or entropy). If you’re using common collocations or the same transitions (“In conclusion,” “Furthermore,” etc.), maybe swap them for something wilder or more human, like, “That being said,” or even, “Honestly, what’s up with that?”

- Tone shifts are your friend. Go from formal to conversational. Detectors generally trip up if you slide between tones because AI outputs are usually “tonally stable.”

- Technical details freak them out (sometimes). If you can, throw in oddly specific info. If you’re writing a review about a product, mention how it creaks a little when it’s cold or how the buttons stick—stuff an AI would have trouble faking.
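
To make the "predictability factor" a bit more concrete, here's a toy Python sketch. Big caveat: real detectors presumably score text with a language model, and this does not do that. It only computes the Shannon entropy of your own word frequencies, which is a crude stand-in I'm using for illustration. `word_entropy` is a made-up helper name.

```python
import math
import re
from collections import Counter


def word_entropy(text: str) -> float:
    """Shannon entropy, in bits per word, of the text's own word frequencies.

    Assumption: this is a toy proxy for "predictability", not a detector score.
    """
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    total = len(words)
    counts = Counter(words)
    # Sum of -p * log2(p) over each distinct word's relative frequency p.
    return sum(-(c / total) * math.log2(c / total) for c in counts.values())


repetitive = "Furthermore it is good. Furthermore it is fast. Furthermore it is cheap."
varied = "It creaks when cold. The buttons stick. Still, I keep reaching for it."

print(round(word_entropy(repetitive), 2))
print(round(word_entropy(varied), 2))
```

Lower numbers mean the same words keep coming back, like a transition you lean on in every sentence. It only compares one draft to another; it says nothing about whether a given detector will flag you.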

But honestly, this all feels like putting duct tape on a leaky boat. AI detectors are far from perfect; they’re just probability machines at their core. FWIW, I’ve used Clever AI Humanizer a couple of times and it’s been sort of a magic wand for this problem. Not totally flawless, but probably the best you’ll get until these tools get smarter (or people just stop taking them as gospel).

Also, if you haven’t already, consider reading this Reddit discussion about AI detector workarounds and actual user experiences: Reddit advice for making AI writing more human.

Curious if anyone has actually had success arguing with a professor or employer about a false flag? Because so far, every convo I’ve had gets the “Well the computer says…” treatment, and it’s infuriating.

Quick hit: If your 100% original work keeps getting wrongfully flagged by AI detectors, you’re not alone (as others said, it’s weirdly common). I actually take a slightly different approach—think of it like debugging code, not “humanizing” for the sake of fooling a robot. Here’s my drill-down:

- Analyze your own text with a readability checker. Many AI detectors factor in Flesch-Kincaid and similar scores. If your stuff is reading like a legal document, break it up—but I’d avoid “forcing” casualness unless it matches your intended style.

- Reverse test with multiple detectors, not just one. Each tool flags different things. I’ve used GPTZero and Originality.ai, and their results rarely match. Cross-reference! You’ll spot odd inconsistencies.

- Meta tags & doc properties sometimes contain telltale signatures that get flagged (more on the software side, but it happens if you switch between editors or use tools like Grammarly). Scrub those before submitting.

- Run your writing through a diff tool if you edit a ton between drafts. This helps you notice if you’re copy-pasting your own work or rewording in a way that turns “you” into “AI-you” by accident. It’s more common than you’d think, especially after spellchecker or paraphrase tool overuse.

- Clever AI Humanizer: Yes, it helps, especially for smoothing out weird “AI markers” like uniformity or repetitive phraseology. Pro: does what it says—genuinely effective for casual or narrative writing, not just essays. Con: heavy use can flatten out your own authentic quirks or make things feel a bit generic if you don’t review the output. It’s better than nothing (and ime beats some rivals like Quillbot in this niche), but always double-check the end result.
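
For the readability check in the first bullet, you don't strictly need an online tool. Below is a minimal Python sketch of the Flesch-Kincaid grade level formula, 0.39 × (words per sentence) + 11.8 × (syllables per word) − 15.59. The syllable counter is a rough vowel-group heuristic of my own and will miscount plenty of words, so expect the numbers to differ a bit from dedicated checkers. The function names are hypothetical.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count runs of vowels, with a minimum of one per word."""
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def fk_grade(text: str) -> float:
    """Approximate Flesch-Kincaid grade level of the text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    return (
        0.39 * len(words) / len(sentences)
        + 11.8 * syllables / len(words)
        - 15.59
    )


plain = "The cat sat. The dog ran."
legalese = (
    "Notwithstanding the aforementioned provisions, the undersigned hereby "
    "irrevocably acknowledges the applicability of supplementary obligations."
)

print(round(fk_grade(plain), 2))
print(round(fk_grade(legalese), 2))
```

If a paragraph scores way higher than the rest of your piece, that's the "legal document" stretch worth breaking up. Whether a given detector actually looks at this score is something I can't confirm, so use it as a sanity check on your own style.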

I’ll echo the others: Don’t just “dumb it down,” and don’t assume a flagged doc means you did anything wrong. Try for transparency if possible—document your writing process, drafts, and sources in case you have to prove it’s yours. No tool, not even Clever AI Humanizer or competitors mentioned earlier, is perfect. Until the tech gets smarter, think like an engineer: test, tweak, repeat. Good luck.
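
On the diff-between-drafts idea, and keeping evidence of your process: here's a small Python sketch using the standard library's `difflib`. It's just one way to do it. `draft_similarity` and `draft_diff` are names I made up, and the `draft1`/`draft2` labels are placeholders for your own file names.

```python
import difflib


def draft_similarity(old: str, new: str) -> float:
    """Word-level similarity between two drafts, from 0.0 to 1.0."""
    return difflib.SequenceMatcher(None, old.split(), new.split()).ratio()


def draft_diff(old: str, new: str) -> str:
    """Line-by-line unified diff between two drafts, as one string."""
    lines = difflib.unified_diff(
        old.splitlines(),
        new.splitlines(),
        fromfile="draft1",  # placeholder label
        tofile="draft2",    # placeholder label
        lineterm="",
    )
    return "\n".join(lines)


first = "I wrote this.\nIt was hard."
second = "I wrote this.\nIt was tough."

print(draft_similarity(first, second))
print(draft_diff(first, second))
```

Saving the diff output next to each draft gives you a dated trail of how the text changed, which is handy if you ever have to show the work is yours.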