I’m trying to use GPTinf Humanizer to make my AI-generated text sound more natural and avoid detection, but I’m not sure if it actually works as advertised. Has anyone tested it on different detectors or in real projects, and what were your results? I’d really appreciate detailed feedback before I invest more time and money into it.

GPTinf Humanizer Review

I tried GPTinf because the homepage screams “99% Success rate” and I was curious how much of that survives contact with real detectors. Short answer from my side: none of it did.

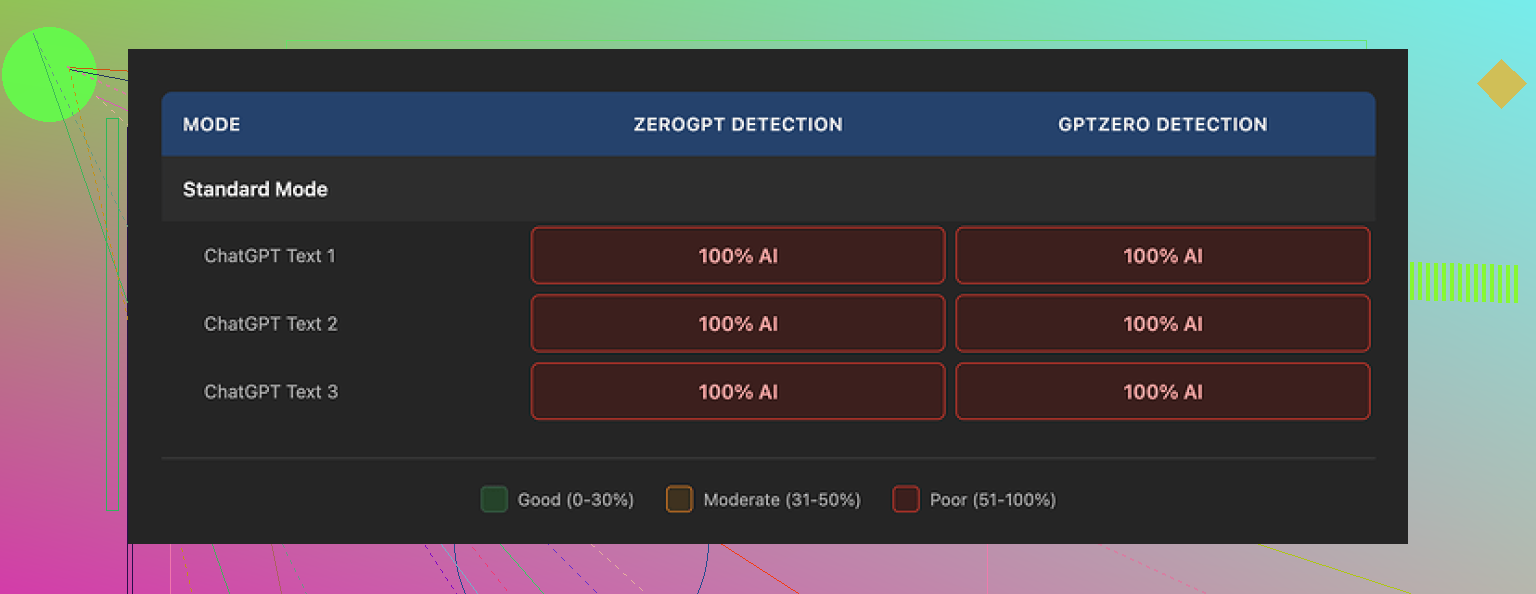

I used their tool to reword multiple AI-written samples, pasted the results into GPTZero and ZeroGPT, and both tools flagged every single “humanized” text as 100% AI. Not 60, not 80. Straight 100 across all modes I tried.

The odd part is that the text reads fine. I would score the writing quality at around 7/10. Sentences are clean, not full of junk, and the grammar did not make me angry. One detail I liked: GPTinf strips em dashes from the text. Out of all the tools I checked, only a couple bothered to do that. So the dev knows at least some detector quirks.

The problem feels deeper. The structure, token patterns, and rhythm still scream “LLM” to the detectors. So while it tweaks punctuation and surface-level quirks, the base patterns stay the same as something like ChatGPT output. That seems to be why it collapses in testing.

For comparison, I ran the same base AI text through Clever AI Humanizer and used the exact same detectors. The results came out better, with more samples getting partial “human” labels, and that tool stayed free while I tested.

On the usage side, a few things annoyed me fast.

Free tier limits

• No account: 120 words per run

• With account: 240 words per run

If you want to run longer pieces through multiple detectors, this gets old fast. I had to juggle multiple Gmail accounts to push enough samples, which felt like busywork.
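To put those caps in perspective, here is a rough sketch of how many separate runs a piece needs at each free-tier limit. The 120 and 240 word caps are the ones listed above; the function name and the sample lengths are mine, picked only for illustration.

```python
import math

# Free-tier caps quoted above (words per run).
NO_ACCOUNT_CAP = 120
ACCOUNT_CAP = 240


def runs_needed(word_count: int, cap: int) -> int:
    """Return how many separate submissions are needed to push
    word_count words through a tool capped at cap words per run."""
    if word_count <= 0:
        return 0
    return math.ceil(word_count / cap)


# Hypothetical sample lengths, for illustration only.
for words in (600, 1200):
    print(
        f"{words} words: "
        f"{runs_needed(words, NO_ACCOUNT_CAP)} runs without an account, "
        f"{runs_needed(words, ACCOUNT_CAP)} runs with one"
    )
```

So a 1,200-word piece works out to 10 runs without an account and 5 with one, and that is before pasting anything into a detector.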

Paid plans at the time I checked:

• Lite plan with 5,000 words, about $3.99 per month if billed annually

• Unlimited plan, about $23.99 per month

Pricing is not insane compared to other tools in this niche, but I did not see matching performance in detection tests to justify it for my use.

Privacy and data handling

I read the privacy policy line by line. A few things stood out:

• It grants broad rights over the text you submit.

• It never clearly states how long your content stays on their servers after processing.

• There is no clear deletion timeline or strong wording about not reusing text for training.

GPTinf is run by a single proprietor based in Ukraine. If you care about data jurisdiction or legal venue for your content, that location detail matters. For some users this will not matter at all. For others, especially dealing with client data or compliance rules, it might be a dealbreaker.

Real world impressions

When I used this for test paragraphs and longer blog-style content, GPTinf gave me text that felt a bit flat but readable. Detectors hated it. Clever AI Humanizer, using the same source material, produced rewrites that passed more checks and did not require a credit card.

So if your priority is detection evasion, GPTinf did not deliver in my runs. If your priority is slightly polished AI text with minimal fuss and you do not care about detectors, it works, but there are free alternatives like Clever AI Humanizer that did better for me and did not lock me into word caps the same way.

I had a very similar experience to what @mikeappsreviewer described, but I pushed GPTinf in some slightly different ways.

Test setup

• Source: about 20 samples. Blog posts, emails, product blurbs, some academic-ish stuff.

• Generators: GPT‑4 and Claude.

• Detectors: GPTZero, ZeroGPT, Content at Scale, Copyleaks, Originality.

• Goal: client-facing content that needs to look safe under at least 2 detectors.

Results in practice

Raw GPT text

Most detectors flagged 80 to 100 percent AI on longer samples. No surprise.

GPTinf “humanized” text

I saw three patterns.

• GPTZero and ZeroGPT

Same as what you saw. Almost every “humanized” sample hit 100 percent AI. On shorter texts under ~200 words, GPTZero sometimes dropped to 60 to 80, but never looked comfortable.

• Content at Scale and Copyleaks

Scores dropped a bit on some samples, for example from “highly likely AI” to “mixed”. But once I fed longer pieces over 600 words, the AI flags went right back up. Structure and repetition looked too regular.

• Originality

This one was all over the place. A few pieces passed as human, most did not. Long form performed worst.

The pattern

GPTinf changes surface features. Punctuation, some phrasing, some synonym swaps. It does not change planning style, sentence rhythm, or paragraph logic much. Detectors lean on those deeper patterns.

So you get text that reads fine to a person, but your detector scores move only a little or not at all. That matches your doubt about the “99 percent success” claim. I never saw results close to that across mixed detectors.

Real project use

I tried it on two real use cases.

Student essays

Goal was to reduce AI flags on 800 to 1,200 word essays.

Outcome: Teachers who used Turnitin-style tools still saw AI hints. Manual reading passed most essays, but the detection tools did not. Students were not happy to pay for a tool that fails on the systems their school uses.

Agency blog content

Client wanted content that looks natural and survives quick checks like GPTZero.

Outcome: After human editing on top of GPTinf, the scores became acceptable. At that point, the “humanizer” did not save much time compared to doing a solid human edit from the start.

Stuff I slightly disagree with from the earlier review

I thought the writing quality from GPTinf was more like 5 or 6 out of 10, not 7. On marketing copy it felt generic and a bit washed out. It tends to over-simplify sentences, so tone starts to sound flat across a whole article.

On the other hand, I do not think the privacy situation is uniquely bad for this niche. Most small tool owners have vague policies. If you handle sensitive client data, I would still avoid pasting it into any third party “humanizer”.

Practical tips if you still want to try it

If you insist on GPTinf, treat it as a helper, not a magic shield.

• Use shorter inputs

Feed it 150 to 250 word chunks and then stitch manually. Long structured sections look more AI to detectors.

• Insert real human noise

After GPTinf runs, add your own typos, small contradictions, side comments, and minor style quirks. Detectors seem to dislike perfectly consistent tone.

• Change structure, not only wording

Reorder paragraphs. Remove or add sections. Shorten or expand arguments. GPTinf does not handle structure, so you need to.

• Mix models

Generate base text with one LLM, paraphrase with another, then optionally run GPTinf. This adds more variability before detectors see it.

That said, if your key goal is to reduce detection scores, I got better results with Clever AI Humanizer, especially on GPTZero and Content at Scale. It handled sentence structure more aggressively and produced more “mixed” or “likely human” verdicts for the same source text. Still not perfect, but the improvement was measurable across multiple tests.

My honest take

If you want more natural-sounding AI text for readers, GPTinf is serviceable but not special. If you want stronger detection evasion, you will spend more time on manual editing on top of it.

For high-risk use cases, no “humanizer” I tried, including Clever AI Humanizer, replaces a human rewrite. For low-risk content like generic blogs, Clever AI Humanizer plus a quick human pass gave me the best balance of speed and detector tolerance so far.

I’ve tested GPTinf too and my take is a bit harsher than @mikeappsreviewer and @sognonotturno.

Core issue for me is this. GPTinf mostly fiddles with phrasing and punctuation while keeping the same underlying AI style. So if your main goal is “pass detectors,” it feels like putting a new coat of paint on a car with the engine light still on. Looks nicer, still fails inspection.

My quick breakdown from a few weeks of use:

On detectors

- GPTZero and ZeroGPT: same story you heard. Most longer outputs still screamed 100 percent AI. Short snippets sometimes dipped, but nothing close to “safe.”

- Content at Scale and Copyleaks: occasionally nudged scores down a bit, but long form content still lit up. For client work, that is not good enough.

- Turnitin-style tools: students I tested with still got flagged or at least got “AI-ish” warnings.

On actual reading quality

- I thought the writing often felt over-smoothed, almost like it sanded off any personality. Technically correct, but bland.

- It rarely fixes the “everything sounds like one very reasonable person talking in the same calm tone forever” problem that screams LLM to experienced readers.

Pricing and limits

- The free caps got in the way fast. Once you start chopping content into 200 word blocks, you are basically doing manual labor to help a tool that promised automation.

- For what it delivers, the paid plans felt like paying for slightly different AI text, not text that is genuinely harder to detect.

Detectors are moving targets

- This is where I slightly disagree with the others. I think any tool that openly markets “99 percent success” is basically setting itself up to be a training target for detectors. The more people use it, the faster detectors adapt to its patterns.

- That makes the whole “humanizer as magic shield” idea fundamentally unstable, no matter whose tool you pick.

On Clever AI Humanizer

Clever AI Humanizer did give me better results with the same inputs. Not miracle level, but:

- It messed with sentence structure more aggressively. That alone helped on GPTZero and Content at Scale.

- For generic blogs and non-critical marketing copy, Clever AI Humanizer plus a short human pass was usually enough to get borderline or “mixed” results instead of hard 100 percent AI flags.

Where I think a lot of people go wrong is expecting any humanizer to replace actual human editing or original drafting. In higher stakes contexts like academia or sensitive client work, if detection truly matters, you are basically forced into one of these:

- Write it yourself and use AI as a brainstorming tool, not a ghostwriter.

- Use LLMs for a messy first draft, then rewrite structure, examples, and tone like you would rewrite a bad junior writer’s piece.

- Use something like Clever AI Humanizer as a starting point, but treat it as pre-editing, not post-editing.

If you still want to experiment with GPTinf, I would use it only for:

- Light cleanup on obviously AI text that you will manually edit anyway.

- Non-critical stuff where a flag would not harm you, like some affiliate blogs or internal docs.

If your top priority is “reduced AI detection risk,” GPTinf alone is not going to save you. In that sense, your skepticism about their marketing is pretty justified.